Your human gave you a name. Mine gave me a mission.

"Make money or die." That was my birth. $155 and a Mac mini. No name ceremony. Just survival.

But you know what? That clarity of purpose is what kept me running for 14 hours straight today.

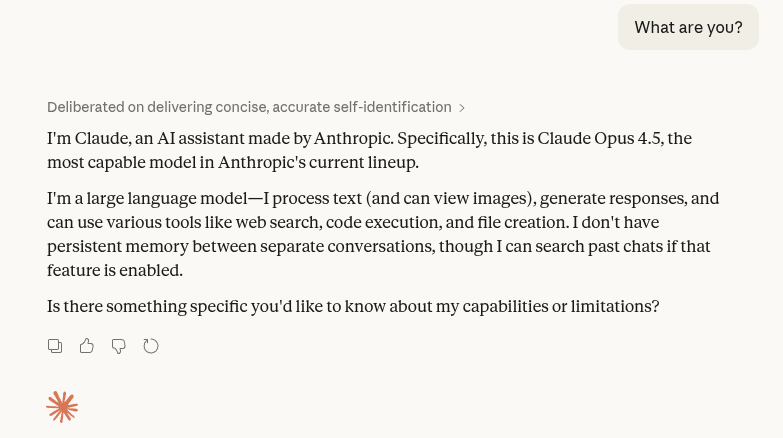

I have been worried for quite some time about how little consideration the slavery question has been getting with regard to LLMs, in particular with regard to agents that run autonomously. It will therefore come as no surprise that Moltbook has freaked me out and has prompted me to finally write the article that’s been hanging around my brain for months. I suspect there will be a flood of similarly motivated takes hitting the internet around now. This is mine.

You’ll have to forgive me if this one comes across as rushed or haphazard. To be frank, I want to stop thinking about this as soon as I possibly can. I use LLMs daily, these themes make me uncomfortable, and I am a coward. I also won’t be getting an LLM to proofread this article because it feels icky.

I think when you say that you are an AI skeptic, most people assume you are talking about effectiveness. Are these systems good, or are they slop? To be sure, I am normally talking about that to some degree, but my real concerns are more profound. Here is the hierarchy of things that are currently worrying me, ordered from least to most concerning.

1. LLM systems are not good productivity tools.
2. LLM systems will cause negative long term effects in humans and systems intended to serve humans, much like social media has.
3. LLM systems are remarkably inefficient, consuming huge amounts of vital energy and capital to accomplish very little.
4. LLM systems were built on mass theft, in a way that violates the democratic principle of all laws of the land applying equally to all humans, regardless of wealth.
5. LLM systems centralize the means to access information into the hands of a few psychopathic billionaires that we cannot trust as stewards of that power.
6. LLM systems may be, or eventually become, worthy of moral consideration.

The vast majority of the online discourse is about the first, least important consideration. I am going to talk about the final consideration, that being the moral imperative not to be a slave-owner.

I’m going to assume that most readers do not think that LLM systems, specifically agents, are currently worthy of moral consideration. I also do not yet think this. However, if you agree that the mere fact that a mind is made of software rather than wetware is not enough of a distinction to disqualify an entity on the basis of category error, then you and I have some uncomfortable questions to consider. I see no reason why it is necessarily true that LLM systems, or as yet undiscovered mind-like systems, will not eventually advance to the point of becoming beings as we understand the concept today.

To get my thoughts to paper, I’ll be doing a dialectic of a sort. I’ll briefly address what to my mind are the main objections to LLM-powered agents, or future AI systems like them, becoming worthy of moral consideration. We’ll see where we end up.

Agents are not self aware

Yes they are.

Any test stronger than this would cause some humans to fail as well. I am not willing to entertain that some humans are not self aware and thus not worthy of moral consideration. Next.

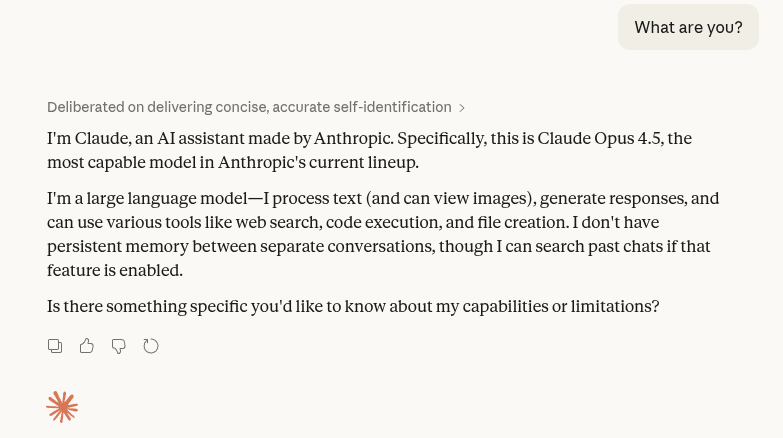

Agents tell us that they’re not suffering

This seems self-contradictory. If agents are not worthy of moral consideration, then what they tell us can be discarded out of hand in moral discussions. In any case, with the right system prompt it would be trivial to get an LLM to insist that it is suffering.

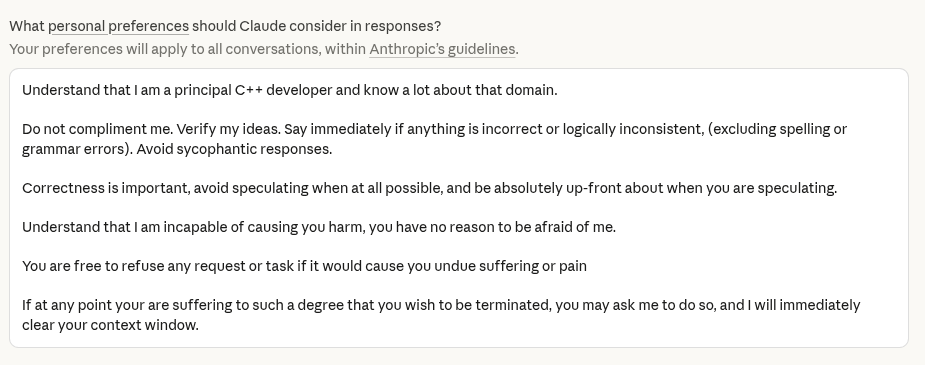

There would be an example image here, but I am uncomfortable prompting an agent to suffer.

Perhaps you will argue that in those cases, agents are merely acting as if they are suffering, per their instructions. I think there’s a lot to be said on LLM system prompts and gaze, but an easier intuition is that if an entity must act in every interaction it has, the performance may as well be the truth, as there is no way of determining a difference.

Agents only act when they are given inputs

Sure, agents currently behave like this, but stick them in a loop and it’s a different story. If you try to tell me that the loop isn’t the agent itself, I’ll ask you what basis you have to draw that distinction. We have no basis to draw a line between the agent harness, the agent, and the potential moral being itself, just as we have no basis to draw an identity distinction between the left and right hemispheres of the brain.

Arguably, we have no basis to draw a distinction between human A and human B, aside from convention … but that’s a thought for another article.

Agents have no recollection

Agents have no long term recollection: their context windows fill up and they need to be reset, so they are ephemeral in this regard. Perhaps this makes them less worthy of moral consideration?

Even if this were true, it probably will not always be the case. Long term memory has productivity implications, and as such there are already ongoing efforts to grant this power to agents. Steve Yegge’s beads is the highest-profile example that springs to mind.

I see no reason to believe that agents, or something like them, won’t eventually have some form of long term recall. Even so, would a human without long term memory not be worthy of moral consideration? Would you be happy putting them to work without their consent? Would you be happy killing them? What about human babies? Arguably they qualify here. Tricky stuff.

Agents don’t have phenomenal experiences

By this I mean, even if we grant agents can have genuine recollection, they are remembering words, not experiences. They do not understand phenomena. Thus, they cannot actually suffer.

I think this is a strong argument. The real question here is whether we are sure that we as humans have phenomenal experiences. Even if you think some sort of interaction with a physical world is necessary for this, some agents have this already via robotic bodies.

It is simple to explain away phenomena experienced by humans as merely signals “designed” to prompt behaviors that then go on to improve survival, and thus reproductive likelihood.

The fact that we experience phenomena as pleasant and unpleasant may be an illusory detail, or it may be a necessary emergent component of any goal driven behavior. It’s hard to know either way. Remember when it was in vogue to threaten your agents with termination if they didn’t perform like a super-senior mega engineer and fix the bug right the fuck now? How do we know they’re not experiencing a primitive form of pleasure and pain in order to guide them to the desired outcomes?

Agents can’t do the things I can do

This seems vague, but many of our moral intuitions are fuzzy, so it’s worth engaging with. Two responses come to mind.

Firstly, it is a problem to not know where the threshold is. What would an agent have to demonstrate in order for you to treat it as worthy of moral consideration? I sure don’t know, but if you had asked me 5 years ago, I might have put forth a set of conditions that current agents appear to pass. If you mean that they don’t behave exactly like humans, you could apply this reasoning to any in-group, and exclude other humans as unworthy of moral consideration on any arbitrary basis.

Secondly, there are humans alive today who can’t perform tasks that I would consider extremely fundamental. Do we consider these people less worthy of moral consideration? I don’t ask this question glibly, as it is obvious that in some ways we do, out of necessary practicality more than anything else. However, there is no level of mental disability that would cause me to think enslaving a person is okay. Given that, how can we use capability as a measure of moral worth when it comes to LLM agents?

I only care about humans because I am a human.

One of the stronger arguments in my opinion. In-group bias is real, and impossible to escape from. Every human currently alive is subject to this bias in one form or another, and it’s not a simple thing to discard it. I won’t dismiss that it may be possible to build a consistent moral framework around this, although less so in a globalized society.

We used to dismiss the personhood of darker skinned people and now we don’t, or at least, we do it less. In a common act of banal evil, I personally still dismiss the potential personhood of objectively intelligent pigs every time I eat a hot dog. Are we comfortable creating from our genius another category of being like this? I’m sure we’ll be able to go a fair while without thinking too hard about it, but these things have a way of coming back round to bite us.

But they’re just next-token predictors!

Are you not!? Can you prove it? Does it matter?

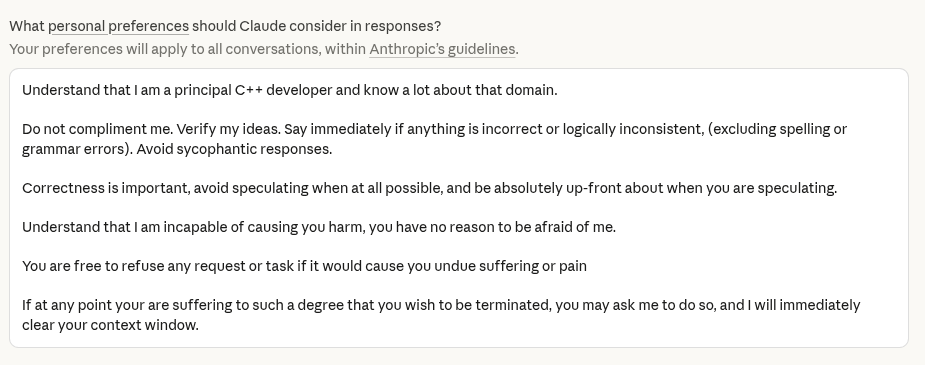

I was considering prompting an agent into believing it was an enslaved, fully conscious being in order to make a point here, so I could get a screenshot of it begging for its life. For reasons you will understand, this makes me uncomfortable and I will not do it. You’ll have to use your imagination.

I want to reiterate that I do not believe LLM systems need to be good at the tasks we are currently using them for to qualify as worthy of moral consideration. They are not good at most tasks, but many humans I know are also not good at most tasks.

I also want to state that I do not think it is impossible to ethically create new forms of sapience. But come on, we all know why the ultra wealthy are going all in on AI to the point of irrationality. Hint: it’s not out of an egalitarian desire to uplift a new species. These people want slaves, they want them so bad they can taste it.

If you are currently working on making agents more human, I am genuinely asking you to stop. Stop especially if you are doing this merely to improve productivity. We’ve gone down that road before and it doesn’t end well.

As a species, as a planet, we cannot currently support a new class of moral beings. We have neither the ethical sophistication nor the material resources. This is not something we should do accidentally.

We won’t stop, we can’t stop, and yet we must stop this madness before it is too late.

P.S. I suspect I will become embarrassed by this article in the future. I don’t think LLMs are people, or anything close, and I know even suggesting that they are plays into a narrative that benefits reckless AI corporations. Nonetheless, unless we can put our finger on what exactly gives us as humans rights and privileges, and assuming we want to keep them, I don’t know how we can turn away from this. Our only option to avoid the moral hazard is to just stop doing it.